Beyond the Click: A 2026 Deep-Dive on GEO, Zero-Click Search, and How AI Chooses What to Say About You

Search still matters in 2026, but clicks matter less.

You can show up in an AI answer and get zero visits.

You can also lose visibility even if you “rank”.

This post breaks down what’s changing, why it’s happening, and what you can do about it with Generative Engine Optimization (GEO).

You’ll get a practical view of how AI systems select sources, how zero-click behaviour reshapes measurement, and what “AI-ready” content looks like for Irish local intent.

What changed: answers now sit in front of results

You no longer just search and then choose a link.

You increasingly search and receive a composed answer.

That answer might appear in:

- Google AI Overviews and other enriched result blocks

- Chat-based experiences like ChatGPT, Copilot, or Perplexity

- Voice assistants and in-car systems that speak a single recommendation

- “Agent” features that book, compare, summarise, and decide for you

This matters because the “winner” is not always the top blue link.

The winner is the source the model trusts enough to quote, paraphrase, or use as a backbone.

That’s the shift GEO is trying to address.

Takeaway you can apply: start thinking in “answer share”, not “rank position”.

GEO in plain terms: you’re optimising for being used, not visited

Generative Engine Optimization (GEO) is the practice of shaping your content so a generative system can:

- Understand it quickly

- Trust it enough to rely on it

- Reuse it safely inside an answer

Traditional SEO asked: “How do I rank for keywords?”

GEO asks: “How do I become the source that explains the topic?”

That means your job changes from attracting clicks to supplying reliable facts.

It also changes what “success” looks like.

Success might be:

- Your brand name appears in answers for specific queries

- Your business details match across sources and get used in local summaries

- Your pricing, availability, or service coverage becomes the default reference point

Takeaway you can apply: pick 10–20 high-intent questions and aim to be the clearest source for each.

Zero-click search isn’t new, but AI makes it structural

Zero-click searches happen when the user gets what they need without visiting a site.

That includes:

- Map packs and business cards

- Featured snippets and “People also ask”

- AI summaries that synthesise multiple sources

- Knowledge panels and entity cards

In 2026, AI increases zero-click behaviour because the answer often feels complete on its own.

It reduces the need to open tabs.

It also reduces the user’s patience for vague content.

If the AI summary answers the question, you do not get the session.

That does not automatically mean you lost the customer.

But it does mean your analytics can under-report your influence.

What changes for measurement

You need to separate:

- Demand capture (you got the click)

- Demand influence (you were mentioned, cited, or used)

Classic dashboards focus on capture.

AI search forces you to track influence too, even if imperfectly.

Practical signals you can track in 2026

- Branded search volume trends

- Direct traffic and “dark” traffic changes (no referrer)

- Query patterns in Search Console (where available)

- Call volume and direction requests that rise while sessions fall

- Mentions in AI answers via manual sampling and monitoring tools

Takeaway you can apply: build a lightweight “AI visibility log” where you test your top queries weekly and note who gets mentioned.
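One way to keep that log is a small script rather than a spreadsheet. The sketch below is a minimal example, assuming you run each query by hand and record which brands the answer names. The `Observation` fields, the product labels, and the brand names are all hypothetical placeholders, and "answer share" here simply means the fraction of logged answers that mention you.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass
class Observation:
    """One manual test of one query in one AI product (hypothetical fields)."""
    checked_on: date
    query: str
    product: str  # e.g. "AI Overviews", "Perplexity"
    brands_mentioned: list[str]


def answer_share(log: list[Observation], brand: str) -> float:
    """Fraction of logged answers that mention `brand` at least once."""
    if not log:
        return 0.0
    hits = sum(1 for obs in log if brand in obs.brands_mentioned)
    return hits / len(log)


def most_mentioned(log: list[Observation], top_n: int = 3) -> list[tuple[str, int]]:
    """Brands ranked by how many logged answers mention them."""
    counts = Counter(b for obs in log for b in set(obs.brands_mentioned))
    return counts.most_common(top_n)


# Placeholder entries: two queries, each checked in two products.
log = [
    Observation(date(2026, 1, 5), "emergency electrician tallaght",
                "AI Overviews", ["Example Electrical", "Other Sparks"]),
    Observation(date(2026, 1, 5), "emergency electrician tallaght",
                "Perplexity", ["Other Sparks"]),
    Observation(date(2026, 1, 12), "electrician call-out fee dublin",
                "AI Overviews", ["Example Electrical"]),
    Observation(date(2026, 1, 12), "electrician call-out fee dublin",
                "Perplexity", []),
]

print(answer_share(log, "Example Electrical"))  # 0.5
print(most_mentioned(log))
```

Manual sampling is noisy, because answers vary by day, location, and phrasing. Treat the number as a trend to watch over several weeks, not a precise metric.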

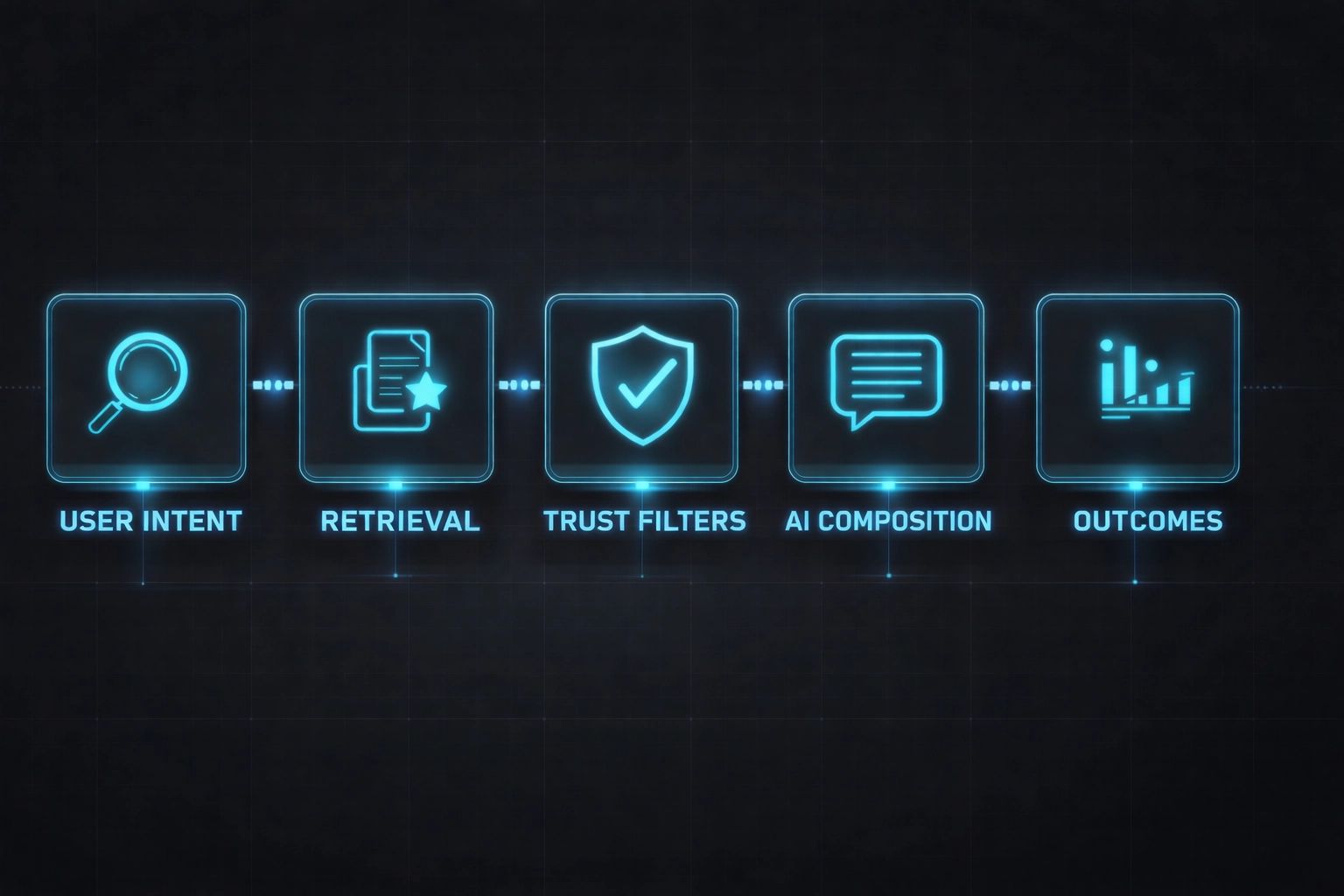

Visualizing the 2026 AI Search Pipeline

Most modern AI answer systems follow a pattern.

The visual below shows the flow in five stages, from the question you type to the outcome you actually see.

- User intent starts with the wording and constraints in the query, like “near me”, “cost”, “open now”, or “best for”.

- Retrieval is where the system pulls possible sources, like website pages, listings, and reviews, plus other factual data it can access.

- Trust filters narrow those sources down by checking consistency, entity signals, freshness, and whether multiple sources support the same point.

- AI composition is when the system writes the response, turning what it found into summaries, comparisons, and shortlists.

- Outcomes are what you can observe after the answer appears, like citations or mentions, increases in branded searches, and sometimes a click.

Different products vary in how much they “ground” the answer.

Some lean heavily on live retrieval.

Some rely more on internal model memory plus selective browsing.

Either way, the content that wins tends to share the same traits:

- Clear factual statements

- Consistent entity signals

- Strong corroboration from other sources

- Low ambiguity

- Good coverage of the topic’s sub-questions

Takeaway you can apply: treat your key pages like reference sheets, not sales pages.

What AI systems look for when deciding who gets cited

AI systems do not “love your brand”.

They prefer sources that reduce risk.

In practice, many systems reward the same underlying signals.

1) Authority: strong expertise signals without hype

Authority in AI search is less about bold claims.

It’s more about being consistently correct.

Signals that help:

- Named authors or organisations with a clear track record

- Pages that cite primary sources or official standards

- Content that matches what other credible sources also say

Signals that hurt:

- Vague “best in Ireland” language with no proof

- Contradictions across pages

- Outdated details (pricing, hours, service areas)

Next step: audit your top 20 pages for claims that have no supporting facts.

2) Clarity: structured writing beats clever writing

AI systems compress information.

If your writing is dense, the model has to guess what matters.

Clear writing gives the model stable building blocks.

What “clear” looks like:

- Short paragraphs

- Specific headings that mirror questions

- Lists, steps, tables, and definitions

- Direct answers near the top of the section

Next step: add one-sentence answers under your key headings before the detail.

3) Entity recognition: the system must know “who is who”

Entities are “things with identity”.

For local business search, common entities include:

- A business name

- A category (e.g., “accountant”, “physio”, “electrician”)

- A location (town, county, neighbourhood)

- People (owners, practitioners)

- Services and products

- Certifications, memberships, and brands you work with

When AI can link your site to a consistent entity across the web, it becomes safer to use.

When it can’t, it avoids naming you.

Next step: make sure your business name, address, phone, and service area are consistent across your site and major directories.
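A consistency check like this can be partly automated. The sketch below is a rough illustration, assuming you have copied your name, address, and phone from each source by hand. The source names, the sample records, and the normalisation rules are assumptions for the example, and the phone logic only handles common Irish formats.

```python
import re


def normalise_phone(phone: str) -> str:
    """Reduce an Irish number to digits in national format (leading 0)."""
    digits = re.sub(r"\D", "", phone)
    for prefix in ("00353", "353"):
        if digits.startswith(prefix):
            # Drop the country code and any "(0)" that followed it.
            return "0" + digits[len(prefix):].lstrip("0")
    return digits


def normalise_text(value: str) -> str:
    """Lowercase, strip punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^\w\s]", "", value.lower())
    return " ".join(cleaned.split())


NORMALISERS = {
    "name": normalise_text,
    "address": normalise_text,
    "phone": normalise_phone,
}


def find_mismatches(listings: dict[str, dict[str, str]]) -> dict[str, dict[str, str]]:
    """Return each field whose normalised value differs between sources."""
    mismatches = {}
    for field, normalise in NORMALISERS.items():
        values = {src: normalise(rec[field]) for src, rec in listings.items()}
        if len(set(values.values())) > 1:
            mismatches[field] = values
    return mismatches


# Placeholder records, not a real business.
listings = {
    "website": {"name": "Example Physio Ltd.",
                "address": "12 Main St., Tallaght, Dublin 24",
                "phone": "+353 (0)1 234 5678"},
    "directory": {"name": "Example Physio Ltd",
                  "address": "12 Main St, Tallaght, Dublin 24",
                  "phone": "(01) 234 5678"},
    "maps": {"name": "Example Physio Ltd",
             "address": "12 Main Street, Tallaght, Dublin 24",
             "phone": "01 234 5678"},
}

for field, values in find_mismatches(listings).items():
    print(field, values)
```

In this sample only the address is flagged, because "St" and "Street" do not normalise to the same string. That is deliberate: it is safer to pick one spelling and use it everywhere than to hope every system expands abbreviations the same way.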

Structured data in AI search: why schema still matters in 2026

Structured data is code that labels your content.

It does not “force” an AI to cite you.

But it reduces confusion.

It helps systems extract facts reliably.

What structured data does in practice

- Clarifies your business type and location

- Identifies services, prices, and coverage areas

- Distinguishes FAQs from marketing copy

- Supports eligibility for rich results in some environments

Key schema types that tend to matter for local and service businesses

- 'LocalBusiness' (and the most specific subtype you can use)

- 'Organization' (for brand identity and sameAs links)

- 'Person' (for practitioners and authors)

- 'Service' (for service definitions and areas served)

- 'FAQPage' (only where you truly provide Q&A)

- 'Review' / 'AggregateRating' (only when compliant and accurate)

- 'Article' (for authored informational content)

What good schema looks like

Good schema matches visible page content.

It includes stable identifiers:

- Official business name

- Address and geo coordinates where relevant

- Opening hours

- Same-as links that confirm identity (trusted profiles)
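To make those identifiers concrete, here is a minimal sketch that builds a JSON-LD block in Python and wraps it in the script tag a page would carry. The property names come from the schema.org vocabulary, but every value (business name, phone, address, coordinates, profile URLs) is a placeholder, and your own markup should only state what the visible page also states.

```python
import json

# Every value below is a placeholder for illustration, not a real business.
business = {
    "@context": "https://schema.org",
    "@type": "Electrician",  # a specific LocalBusiness subtype
    "name": "Example Electrical Ltd",
    "url": "https://www.example.com/",
    "telephone": "+353 1 234 5678",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "12 Main Street",
        "addressLocality": "Tallaght",
        "addressRegion": "Dublin",
        "postalCode": "D24 XXXX",  # your Eircode goes here
        "addressCountry": "IE",
    },
    "geo": {
        "@type": "GeoCoordinates",
        "latitude": 53.2859,   # placeholder coordinates
        "longitude": -6.3733,
    },
    "openingHoursSpecification": [
        {
            "@type": "OpeningHoursSpecification",
            "dayOfWeek": ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday"],
            "opens": "08:00",
            "closes": "18:00",
        }
    ],
    "sameAs": [
        "https://www.facebook.com/example",
        "https://www.linkedin.com/company/example",
    ],
}

# ensure_ascii=False keeps characters such as the fada in Irish place names.
json_ld = json.dumps(business, indent=2, ensure_ascii=False)
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

Generating the block from one source of truth, rather than hand-editing it on each page, makes it less likely that your schema and your visible details drift apart.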

Bad schema usually fails in predictable ways, and AI systems treat it as a reliability problem.

Two common examples:

- Review mismatch: you mark up one set of generic reviews site-wide (or on pages that don’t match what the review is actually about). When the review content doesn’t align with the specific service/page context, many systems will ignore the markup, and you can lose eligibility for rich results or have them stripped over time.

- Identity confusion: your schema claims one thing while your visible text says another (for example, schema says you’re a “Dentist” but the page clearly describes a “Physiotherapist”, or your 'LocalBusiness' subtype contradicts your service descriptions). That contradiction makes your entity signals unstable, and you can get excluded from answer pools because the system can’t trust what you are.

Takeaway you can apply: add schema that improves accuracy, not schema that inflates claims.

Entity relationships: how AI connects your business to topics and intent

AI answers often rely on networks of meaning.

That includes relationships like:

- Business → offers → service

- Service → solves → problem

- Service → applies to → customer type

- Business → located in → town/county

- Business → serves → area

- Business → associated with → certification/association

- Business → has sentiment → review themes

If you never state these relationships clearly, the model infers them.

Inference is risky, so you may get excluded.
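You can audit this yourself by writing the relationships down as simple subject, predicate, object triples and checking which ones are missing. The sketch below is only an illustration: the predicate names, the `REQUIRED` set, and the sample business are hypothetical, and it says nothing about how any AI system stores relationships internally.

```python
from typing import NamedTuple


class Triple(NamedTuple):
    subject: str
    predicate: str
    obj: str


# Hypothetical rule: every service should state what it solves,
# who it applies to, and where it is served.
REQUIRED = {"solves", "applies_to", "served_in"}

# Relationships your pages currently state in plain text (placeholder data).
triples = [
    Triple("Example Electrical", "offers", "emergency call-out"),
    Triple("emergency call-out", "solves", "loss of power"),
    Triple("emergency call-out", "applies_to", "homeowners"),
    Triple("emergency call-out", "served_in", "Galway city"),
    Triple("Example Electrical", "offers", "EV charger installation"),
    Triple("EV charger installation", "applies_to", "homeowners"),
]


def missing_relationships(triples: list[Triple], business: str) -> dict[str, set[str]]:
    """For each service the business offers, list the required predicates never stated."""
    services = [t.obj for t in triples
                if t.subject == business and t.predicate == "offers"]
    gaps = {}
    for service in services:
        stated = {t.predicate for t in triples if t.subject == service}
        missing = REQUIRED - stated
        if missing:
            gaps[service] = missing
    return gaps


print(missing_relationships(triples, "Example Electrical"))
```

Here the EV charger service has no stated problem or service area, so those are the sentences to add to that page first.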

How to express relationships on-page

Use simple, explicit statements:

- “You can book emergency call-outs in Galway city and nearby suburbs.”

- “You get VAT returns and payroll support for small retail businesses.”

- “You’ll work with a CORU-registered therapist.”

- “You can choose weekend appointments in Dublin 8 and Dublin 6.”

Then support them with:

- Service area pages or a clear coverage section

- FAQs that match real questions

- Policies and process pages (how booking works, refunds, timelines)

Takeaway you can apply: write one “relationship paragraph” per key service that states who it’s for, where it applies, and what outcome it delivers.

Sentiment analysis: why reviews and wording shape AI recommendations

AI systems increasingly summarise “what people think”.

That is sentiment analysis in action.

It’s not just star ratings.

It’s themes, consistency, and language.

Common sentiment themes AI can extract:

- Speed and reliability (“on time”, “quick turnaround”)

- Communication (“kept me updated”, “clear pricing”)

- Quality (“fixed first time”, “attention to detail”)

- Fairness (“no hidden costs”)

- Trust (“honest”, “explained options”)

Why this affects generative answers

When a user asks:

- “Who is reliable?”

- “What’s a good option for families?”

- “Is there someone who explains things clearly?”

The system needs evidence.

Reviews and testimonials provide that evidence at scale.

How to make sentiment signals easier to use (without faking anything)

- Give customers specific feedback prompts (speed, clarity, outcome)

- Reply to reviews with factual detail (service type, area, timeframe)

- Keep service descriptions consistent with what reviews praise

Takeaway you can apply: identify the top 3 themes you want to be known for, then make sure your reviews and on-page copy reflect them naturally.

AI search trends for 2026 that change how you should write and structure content

Trend 1: “Best” queries are turning into constraint queries

Users now ask:

- “Best X under €Y”

- “Best X open now near me”

- “Best X for a specific problem”

AI answers handle constraints well when the source provides structured facts.

Next step: publish pages that include constraints you can genuinely satisfy (pricing bands, areas, lead times, appointment types).

Trend 2: Multimodal search is normal

Users search with screenshots, photos, and voice.

That pushes you to provide:

- Clear image alt text and captions

- Visual proof (before/after where relevant and ethical)

- Plain-language explanations that work well in voice summaries

Next step: add captions that state what the user is looking at and why it matters.

Trend 3: “Agentic” behaviour reduces browsing

Booking, comparing, and shortlisting happen inside the tool.

So your content needs to answer operational questions:

- Availability

- Process

- Geography

- Pricing logic

- What happens next

Next step: add a “How it works” section to your key service pages with 5–7 steps.

Trend 4: Source diversity is shrinking in some answers

Many AI answers rely on a small set of frequently used sources.

That raises the bar for everyone else.

To compete, you need:

- Unique data or clear original framing

- High consistency across the web

- Strong entity identity

Next step: create one “definitive guide” page per core service that becomes the reference.

A practical 2026 GEO playbook for Irish businesses

This is not a checklist you complete once.

It’s a system you maintain.

1) Choose your “answer set”

Your answer set is the short list of high-intent questions you want to be the clearest source for.

Include:

- “Near me” and town/county variations

- “Cost” and “how long does it take”

- “Is X worth it” or “do I need X”

- “X vs Y” comparisons

- Compliance and Irish-specific rules where relevant

Next step: write the exact questions in your customer’s language, not your industry language.

2) Build one pillar page that maps the topic

A pillar page should:

- Define the topic

- Cover the main sub-topics in a logical flow

- Link to deeper pages that answer each sub-topic fully

- Provide concise, quotable sections

You’re reading a pillar-style structure right now.

Next step: map your content like a table of contents, then fill gaps.

3) Create “supporting pages” that are narrow and factual

Supporting pages win when they are specific.

Examples:

- “Emergency electrician in Tallaght: response times, call-out fees, what to do first”

- “Payroll for small cafes: what’s included, timeline, documents needed”

- “Physio for runners: assessment steps, expected plan length”

Next step: pick one niche use case per service and write a page that answers it end-to-end.

4) Make your local intent explicit

Don’t assume AI will infer Irish context.

State it:

- Currency in euros

- Irish regulations, agencies, and standards (only where accurate)

- County and town coverage

- Irish wording users actually use (“county”, “eircode”, “GP referral”, “NCT”, etc., where relevant)

Next step: add a “Service area” and “Pricing in Ireland” section where it naturally fits.

5) Keep facts updated like you would a product catalogue

AI answers degrade when details drift.

You need a cadence.

A simple pattern:

- Monthly: update prices, lead times, availability, and seasonal notes

- Quarterly: update FAQs, policies, and screenshots

- Twice a year: refresh the pillar page and internal links

Next step: add “last reviewed” dates to pages that change often.
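If you store those dates somewhere structured, a short script can tell you which pages are overdue. This is a minimal sketch under stated assumptions: the page URLs and dates are placeholders, and the day counts for each cadence (31, 92, and 183) are rough approximations of a month, a quarter, and half a year.

```python
from datetime import date, timedelta

# Approximate review intervals in days (assumed values).
CADENCE_DAYS = {"monthly": 31, "quarterly": 92, "half_yearly": 183}

# Placeholder pages with the date each was last reviewed.
pages = [
    {"url": "/pricing", "cadence": "monthly", "last_reviewed": date(2026, 1, 10)},
    {"url": "/faq", "cadence": "quarterly", "last_reviewed": date(2025, 12, 1)},
    {"url": "/guide", "cadence": "half_yearly", "last_reviewed": date(2025, 6, 1)},
]


def overdue_pages(pages: list[dict], today: date) -> list[str]:
    """Return URLs whose review interval has fully elapsed."""
    overdue = []
    for page in pages:
        due = page["last_reviewed"] + timedelta(days=CADENCE_DAYS[page["cadence"]])
        if today > due:
            overdue.append(page["url"])
    return overdue


print(overdue_pages(pages, today=date(2026, 2, 20)))  # ['/pricing', '/guide']
```

Passing `today` in explicitly, rather than reading the system clock inside the function, keeps the check easy to test and to rerun for any date.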

Unanswered questions: how do AI models prioritise Irish local intent?

AI search is improving fast, but Irish local intent still raises open questions.

These are practical uncertainties you should plan around.

1) How strongly do models weight Irish spelling, phrasing, and place names?

Irish place names can be ambiguous.

Some have Irish-language versions, English versions, and nicknames.

It’s unclear how consistently models unify:

- “Dún Laoghaire” vs “Dun Laoghaire”

- “Derry” vs “Londonderry” (and cross-border intent)

- Townlands and local neighbourhood names that aren’t well mapped

What you can do now: include common variants naturally on service area pages and in FAQs.
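When you audit your own pages and listings for variants, it helps to fold spellings to one comparable form first. The sketch below is an illustrative approach using Python's standard `unicodedata` module: it strips accents such as the fada and applies a small alias table. The `ALIASES` entries are hypothetical examples, and which form you treat as canonical is your own editorial choice.

```python
import unicodedata


def fold_place_name(name: str) -> str:
    """Strip accents, ignore case, and collapse whitespace for comparison."""
    decomposed = unicodedata.normalize("NFKD", name)
    without_marks = "".join(
        ch for ch in decomposed if not unicodedata.combining(ch)
    )
    return " ".join(without_marks.casefold().split())


# Hypothetical alias table mapping folded variants to one folded form.
ALIASES = {
    "dunleary": "dun laoghaire",
}


def canonical_place(name: str) -> str:
    """Fold the name, then map known variants to a single form."""
    folded = fold_place_name(name)
    return ALIASES.get(folded, folded)


for variant in ["Dún Laoghaire", "Dun Laoghaire", "DUN  LAOGHAIRE", "Dunleary"]:
    print(variant, "->", canonical_place(variant))
```

This is for your internal checks only. On the page itself, keep the correct spelling with the fada and mention the common variants in natural sentences.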

2) What is the “tie-breaker” for local recommendations in smaller towns?

In dense cities, there are many sources.

In smaller towns, the system may lean heavily on:

- A handful of directories

- Review volume

- Proximity signals

- Category fit (how you’re labelled)

We still don’t have transparent weighting.

What you can do now: tighten your category selection, service descriptions, and review themes so the model has fewer reasons to choose someone else.

3) How do models interpret Eircodes in local relevance?

Eircodes can be precise.

But user behaviour varies.

Some users type an Eircode, some type a town, and some say “near me”.

It’s not fully clear how different AI systems use Eircodes in ranking and answer composition.

What you can do now: add Eircode support where it helps users (contact pages, service areas), and ensure it matches your listings.

4) How do Irish regulatory and pricing norms influence answer confidence?

For some sectors, users expect Irish-specific guidance.

Examples include:

- Tax and payroll rules

- Health and care credentials

- Building standards and safety requirements

If your content lacks Irish context, the model may prefer generic sources.

If your content includes Irish context but is wrong or vague, it may avoid you.

What you can do now: write short, factual “Ireland-specific notes” and keep them updated.

5) Do models treat local news and community sites as stronger evidence in Ireland?

In some markets, local publishers and community pages influence trust.

In Ireland, local coverage can be patchy by region.

It’s not clear when local news becomes a ranking advantage versus a neutral signal.

What you can do now: focus on consistency and clarity first, then diversify your citations where it’s natural.

Takeaway you can apply: assume the model is uncertain about Irish local nuances, and reduce that uncertainty with explicit, consistent details.

What “AI-ready” pages look like in 2026 (a quick self-check)

Use this as a fast audit.

Your key pages should make it easy to extract:

- Who you are (entity)

- Where you operate (service area)

- What you do (services)

- What it costs (ranges or rules, if you can share them)

- How it works (steps and timelines)

- Proof (reviews, credentials, examples)

- Limits (what you don’t do, so the system avoids misrepresenting you)

Takeaway you can apply: add a “Limits and exclusions” section to reduce misquotes and wrong referrals.

The bottom line: clicks are optional, clarity is not

AI search in 2026 rewards sources that are easy to interpret and hard to misunderstand.

That is the core of GEO.

If you make your facts explicit, keep your entity signals consistent, and structure your pages like reference material, you give AI systems fewer reasons to ignore you.

And in a zero-click world, being included in the answer is often the visibility that counts.